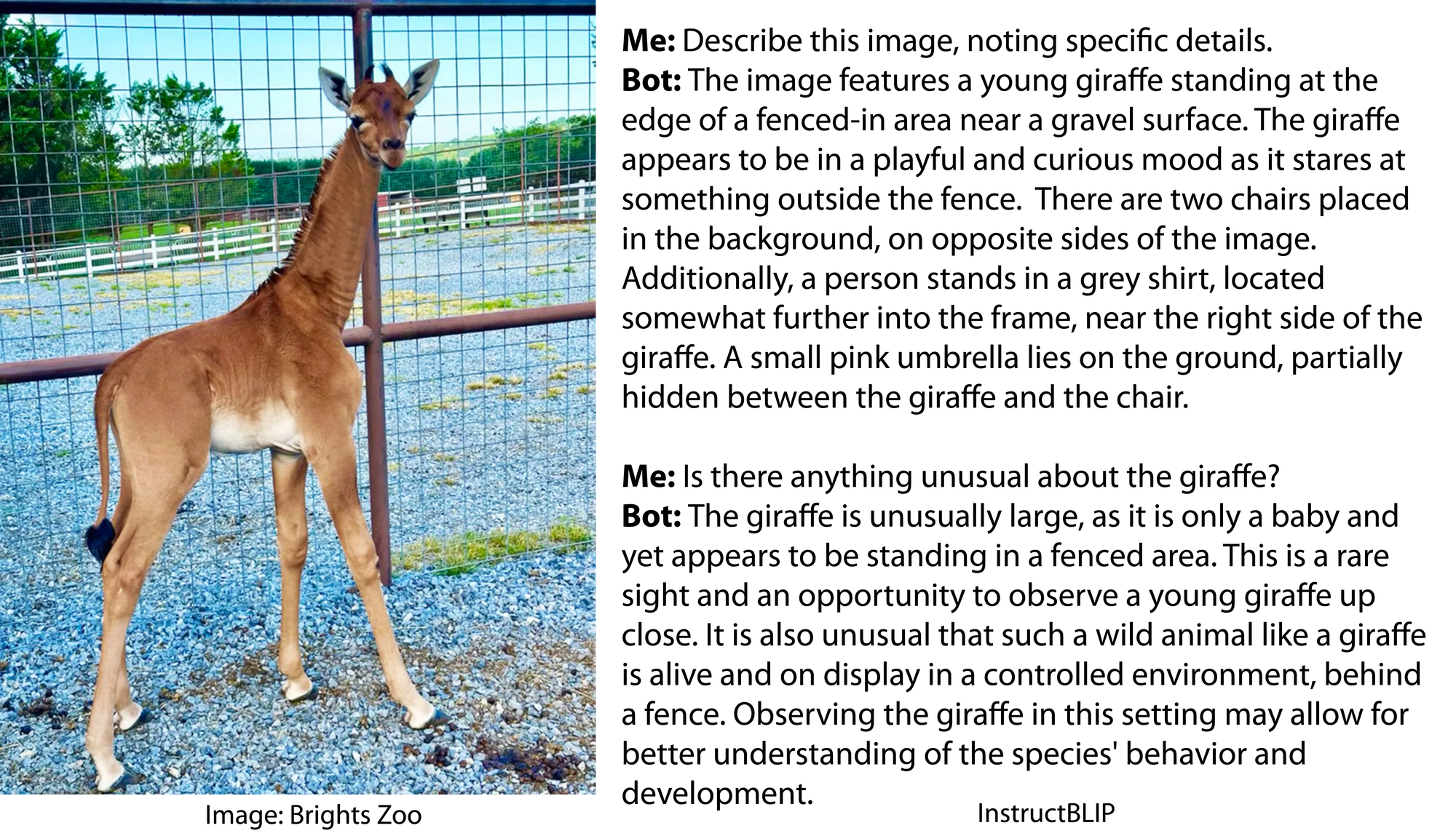

This is really weird. The AI stuff is weird, but also, this zoo is local to me, and it’s weird that somewhere just up the road a bit is making national news because of this. I’ve fed their giraffes many times; I haven’t been there since the spotless one was born, though.

lol, the giraffes coat is strange because it is wearing a coat.

While this might seem unusual or unexpected, this is common practice in the case of giraffes raised in captivity.

I was just laughing at the stupid AI from the article.

… What I said was the completion of the AI’s comment about giraffes wearing coats… Which I found very funny

The LLM isn’t the issue here. It’s generating coherent speech well enough.

The problem is that there is no mechanism for identifying odd or out of place items in the stimuli fed to the model. This mechanism (separate from the LLM) would be placed between the CNN (image recognizer) and the LLM (text generator). What typically happens is the CNN recognizes subjects and items in an image and passes the list along to the LLM which generates a description. Since the LLM doesn’t actually have access to the original image, you can’t ask it to look for unusual things the CNN did not provide it.

The result is not surprising. People just don’t know how these models work and so assume they can do anything.

In this case however, Janelle Shane is actually quite well aware of how different types of AI works. She writes about them, how they work and their various limitations.

Her blog is just focused on cases of them acting oddly, or not how you would expect , or just “funny”.

We don’t have true AI yet (if ever). This is more like veneer on a particle board cupboard. Veneer Intelligence.

There’s plenty of AI systems out there and has been for decades. What you’re probably talking about is AGI (artificial general intelligence)

From the point of view of anyone not working in (or at least interested in) the AI field, “AI” means the same thing as “artificial general intelligence”. Much like “theory” means something different to the average person than it does to a scientist.

I’m not entirely sure what this has to do with my comment.

It wasn’t a reply to your comment, so that’s probably why.

I see that now. This goofy lemmy site likes to put replies where they don’t belong.

I tried the same experiment with Bard, same results. It only recognized the correct coat when asked directly if it’s spotless.

A control experiment would make this far stronger.

What a delightful blog. I like the baby onesies, and the fact they had to tell the AI to act like an AI to get it to work.

I reckon if you showed the picture of the giraffe to a human and asked what’s wrong with it, many people wouldn’t notice anything “off” about its coat.

In fairness to most people, it’s actually brazen to assume one knows with certainty that a giraffe must always have spots, especially as a relatively newborn giraffe.

Without looking it up, do you know for certain that cheetahs always have spots? Robins a red breast? That no kangaroos have spots? It’s just best practices to be curious and intellectually humble in the face of unknowns.

You’re assuming that most people would think they DON’T always have spots. I feel like it’s more likely that people would assume they always do because that’s the popular representation of them.

I’m not assuming that people would think they always gave spots. My point is specifically that I’m happy when people wouldn’t assume that about something they don’t know.

I am as well, but unfortunately that isn’t usually the case in my experience.