Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid!

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post, there’s no quota for posting and the bar really isn’t that high

The post-Xitter web has spawned so many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

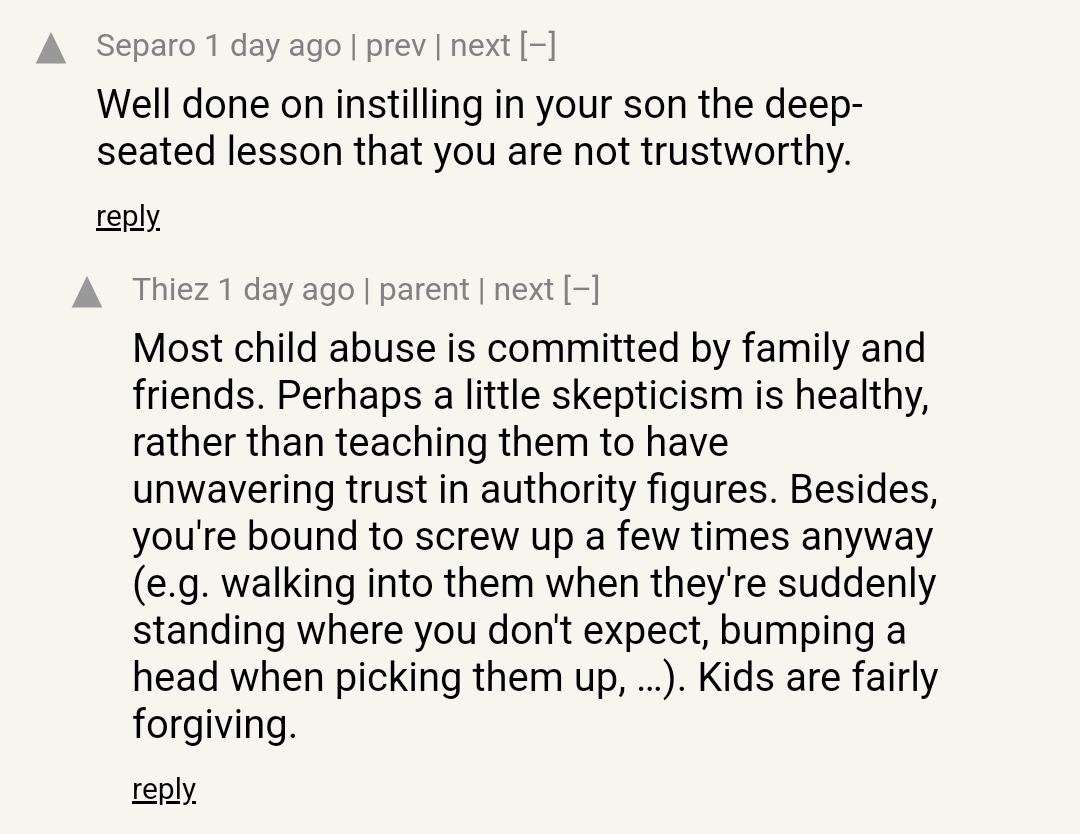

tired: learning from others through the wealth of experiences and resources that are widely available

wired: taking a “first principles” approach to endangering and traumatising your own child

I was at the apartment pool chatting with a friend who is a very advanced swimmer - the type that swims laps seemingly endlessly - and she asked “have you ever seen what would happen if [your two year-old son] fell in the pool?”. I said no, and then she suggested I try it so that I would at least know. So I picked him up and with no warning tossed him in. He immediately froze under water, arms and legs outstretched in literally stunned silence. I counted to 5 and pulled him out and he was trembling with fear.

At that point I realized that the time it takes for a kid to drown is one breath. That may be 3 seconds, may be 10 seconds.

HN Parenting Pro-tip: Chuck your kids into the pool, keep 'em sharp. Sure they might drown, but at least they won’t trust you after they make it back to land.

Oh my gahhhhd. “Most child abuse is committed by family and friends, so why not commit some abuse against your child?”

Bean Dad but instead of a can opener it’s swimming/not drowning

what the fuck

one of my few childhood memories is some dipshit fuckwad at a family-friends event who, upon learning that I hadn’t ever gone/tried swimming, decided all upon their lonesome to throw me into the pool

unfortunately I only recall the general event, and not who it was.

Something like that happened to me at a similar age (won’t go into details) and I never got over my dislike of going into pools and the ocean and learning to swim (which I never have).

Reminds me of this bloke’s approach to teaching swimming.

As somebody who fell into the deep end of a pool when I was younger of my own accord and took a decade or so to learn how to swim after that, I can say that’s the sort of thing that’s gonna fuck that kid up badly. Even today, I’m not entirely comfortable in the water.

no lies detected

Oh man, I’ve always wondered how the hiring process could become more impersonal and demeaning, now I know!

About a year ago I ran across something (a ZA startup, by the looks of it) that essentially pitched casting reels as an interview screener, and one of the highlights of the pitch was “they just send in a video clip introducing themselves, and you can tell whether they’re a cultural fit”.

No need for all that messy scheduling! No misunderstandings[0]! Totally fair[1]! Totally not abusable[2]!

Noped out of that so hard, on account of all the obvious reasons, but also because it immediately felt like it had ulterior motives/uses, such as dataset for ML training.

Imagine we’ll see some more of that.

[0] - that you get to do anything about

[1] - y’know, if you ignore the complete power imbalance and complete susceptibility to allowing hidden profiling

[2] - except for all the extremely obvious ways

Oof.

That being said, that’s not unheard of. I remember back when I was looking at scholarships and such that some places wanted video submissions, and I have friends in other industries that had to do the same. That in no way diminishes the shittiness of it all.

The ML angle seems novel though. Ostensibly you’d have a resume/cover letter that is effectively a set of tags for the video component, which I guess you could do sentiment analysis over? I guess the end game is to build a robot that can tell if you are a team player or not, and if you’d lie about it out of necessity for a job vs. eagerly for kool aid.

That strikes me as probably illegal, at least in the US (although I can’t find a better source, if someone can find where the EEOC says that it’d be appreciated.)

yes thanks for reminding me that the US is the center of the known universe and that all morality and allowances of anything ever should be modelled on events there. I almost forgot!

Using it for ML training would also be illegal in the EU under GDPR.

But this already exists. My colleague had to submit a video self-interview when applying to Goldman Sachs, the pillar of morality and ethics in the corporate world.

I didn’t intend to make any comment on morality. US law seems relevant given that it’s near-impossible to find one of these nonsense AI startups that isn’t either in the US or targeting US customers. Indeed, this one looks to be based in Los Angeles.

I literally stated that the thing I was referencing in my comment (that you replied to) was from ZA. but I appreciate your doubled-down US-centrism in your second reply. nice job! glad you can remind me again! I must’ve forgotten about it in the handful of hours since you last did it!

@froztbyte @Eiim “ZA” is not a country abbreviation that many united statians know, at least from my anecdotal experience.

Why would I want my interview experience to be “more gamified”?

So you can quick load your save state from the beginning of the interview and have another go at defeating the boss now you know their movement pattern?

you know I normally hate those job-simulator games but this just made me think there’s potentially a great indie game to be made in Interview Simulator

Is this by the same author as “Don’t Create the Torment Nexus”?

https://www.jwz.org/blog/2024/04/garry-tan-is-not-just-a-cryptofascist-hes-a-christofascist/

I’ll leave the sneer to jwz, but Jesus Fucking Christ indeed.

as I remarked elsewhere, I suspect this is one of those cases where they (tan/thiel/etc) are lying for power, not that it makes a practical difference about the shitty policies and ideas at the end of the day

This professor is arguing we need to regulate AI because we haven’t found any space aliens yet and the most conceivable explanation why is that they all wiped themselves out with killer AIs.

And hits some of the greatest hits:

- AI will nuke us all because the nuclear powers are so incompetent they’d hook the bombs up to Chat-GPT.

- AI will wipe us out with a killer virus for reasons

- We may not be adorable enough towards AI to prevent being vaporized even if we become cyborgs 🥺

- AI will wipe out an entire planet. Solution: we need people on a bunch of different planets and space-stations to study it “safely”

- Um actually space aliens would all be robots. Be free from your flesh prisons!

Zero mentions of global warming of course.

I kinda want to think that the author has just been reading some weird ideas. At least he put himself out there and wrote a paper with human sentences! It’s all aboard the AI hype train for sure, and constantly makes huge logical leaps, but it somehow doesn’t make me feel as skeezy as some of the other stuff on here.

Personally I think an unnoticed black swan event relating to climate change is way more likely. ‘Whoops turns out that we thought 1.5C wasn’t that big a problem but this causes some feedback loop in the oceans killing them all, yes it caused more algae to grow, but these had less nutrition causing the fish to overeat and die, causing the algae to choke themselves out. Dead seas everywhere’.

Sad upvote.

Don’t worry, as people are aware this might happen, it isn’t technically a black swan event. It is just a risk we are ignoring ;) (I’m not sure if this is actually a real risk, or that we really are ignoring it, I’m not a marine biologist).

I feel this makes it an unlikely great filter though. Surely some aliens would be less stupid than humanity?

Or they could be on a planet with far less fossil fuels reserves, so they don’t have the opportunity to kill themselves.

Think both ‘has the wisdom as a society to prevent unknown unknown side effects from industrialization from wrecking the ecosystem’ and ‘has almost no access to fossil fuels’ could also be pretty effective filters. In the latter case they prob would still be around but they wouldn’t spread in the universe so we wouldn’t hear from them which I think would satisfy the filter reqs.

“Sometimes I think the surest sign that intelligent life exists elsewhere in the Universe is that none of it has tried to contact us.”

Calvin and Hobbes, 8 November 1989

“They say the pollutants we dump in the air are trapping the Sun’s heat and it’s going to melt the polar ice caps! Sure, you’ll be gone when it happens, but I won’t! Nice planet you’re leaving me!”

the academic-pressrelease-industrial complex has a lot to fuckin’ answer for

I hate that you can’t mention the Fermi paradox anymore without someone throwing AI into the mix. There are so many more interesting discussions to have about this than the idea that we’re all gonna be paperclipped by some future iteration of spicy autocomplete.

But what’s even worse is that those munted dickheads will then claim that they have also found the solution to the Fermi paradox, which is, of course, to give more money to them so they can make their shitty products ~~even worse~~ safer.

Also:

AI could spell the end of intelligence on Earth (including AI) […]

Somehow Clippy 9000 that’s clever enough to outsmart the entirety of the human race because it’s playing 4D chess with multiverse time travel, is, at the same time, too stupid to come up with any plan that doesn’t kill itself in the end, too?

There’s a concentrated effort, it seems, at bringing rationalist stuff into SETI.

Yeah, the fermi paradox really doesn’t work here, an AI that was motivated and smart enough to wipe out humanity would be unlikely to just immediately off itself. Most of the doomerism relies on “tile the universe” scenarios, which would be extremely noticeable.

If only the “Dark Forest” hypothesis of human-extraterrestrial interaction would enter the public consciousness any sooner. We’d at least have more interesting ideas than this shit.

NB: I have not watched the 3BP adaptation yet, tho I have heard it is good. I have listened to the first two books as audiobooks and am tickled by Bruno Roubicek’s mildly (three body) problematic accent-work.

Both Lovecraft and Reynolds play with the idea that sentience, when discovered, is hunted down and exterminated by hostile entities. Scalzi’s Old Man’s War universe is somewhere where alien species are in ruthless competition.

All of it is a deflection of the possible and frankly terrifying possibility that we are alone (at least in this galaxy)

The exo-galactic searches haven’t found anything either…

Having a bit of eye trouble, so please ignore any typos. Weird scratching feeling behind the eye, trying not to touch it.

But yeah, isn’t that odd that we have not found any? Perhaps it is that if we see them they also see us ba… jesus my eye, fuck. Sorry. But yeah perhaps seeing goes both ways? And perhaps this is why we have not ‘found’ anything in exo-galactic searches, perhaps it is all a coverup, because we do not want to be seen in return.

I mean, isn’t it also odd how important aliens, and the search of extraterrestrial life are in our culture but how few resources we actually put in finding them? Perhaps as soon as we spot something the searchers get shut down, or worse!

(Don’t worry my eye is fine, I’m also not being serious, I was doing a bit inspired by the There is no Antimemetics Division SCP series. Now also in short clip form. CW a certain type of lovecraftian horror + memetics. Might not want to read it if you got freaked out by Rokos B, or weird horror in general).

I have never been a huge fan of most of the scp stuff (not that it’s bad, it’s just not really my thing), but I have reread that series several times at this point, it’s so good!

This story series focuses a lot more on people than on the dry procedural things the normal scp stuff has, so it’s not strange that this hits differently.

American white supremacist, pedophilia apologist, alt-right pseudointellectual, grifter, transphobe, anti-feminist, ableist, eugenicist and fake contrarian Richard Hanania jumps on the siskind-is-basically-a-prophet bandwagon in order to (checks notes) shill designer mouth bacteria.

If I had a 1980s sitcom mom sitting next to me here, she might ask “If Scott Alexander told you to jump off a bridge, would you do that too?” To which I’d respond probably not, but I would spend some time considering the possibility that I had a fundamentally flawed understanding of the laws of gravity.

Content warning: contains photo of Siskind (also text by Hanania).

The picture, I could live with; The blog, I could stomach; but the comments? Oh my god the comments

Yes, I used to think I was a very smart person, smartest in most rooms I entered. I now realize I had never entered any really smart rooms. I now say publicly and often that Scott Alexander is the smartest person I have ever encountered as well as one the best explainers–and his commenters are often nearly that smart and persuasive as well. It has been humbling to recognize what a truly smart person looks like . . . but also a great blessing.

Wtf? If I didn’t know any better I’d think he was talking about Euler! I’d vomit and die if I ever heard that irl.

Scott apparently has a ‘niceness field’ where people around him try to act nicer than normal, and this confuses a lot of people to think he is actually nice and his style of writing is good, smart and balanced.

I met him, he really does

Huh. Too bad he and I will probably never meet; this sounds like an instance where my ability to be incredibly abrasive could be used for good. (Or at least for comedy.)

https://twitter.com/dvassallo/status/1779753281960722706

Critiquing an AI startup?

distasteful, almost unethical

First, do no harm.

ah yeah never say anything bad about anything, ever, especially if it’s a uwu smol bean shit product

cutting out cancerous growths is part of medicine, of course

the hypocritic oath

(the joke doesn’t really work but I had to do it)

More like, grifto-crappy oath!

His response is on point, no notes.

We disagree on what my job is

Their real crime: critiquing while brown.

that’s why the tech bros went after this reviewer in particular, much harder than the other reviewers who thought it sucked

I have only ever seen his electric vehicle reviews and didn’t know he did gadgets, but finally clicked this YouTube recommendation. He is so complimentary of the good stuff, like he’s trying to be as fair as possible.

Was his review of the Fisker Ocean similarly unethical? Silly.

Previously discussed here

Ah, damn, I even read that post. Must’ve slipped my mind because I just saw this on Reddit.

No worries! :-)

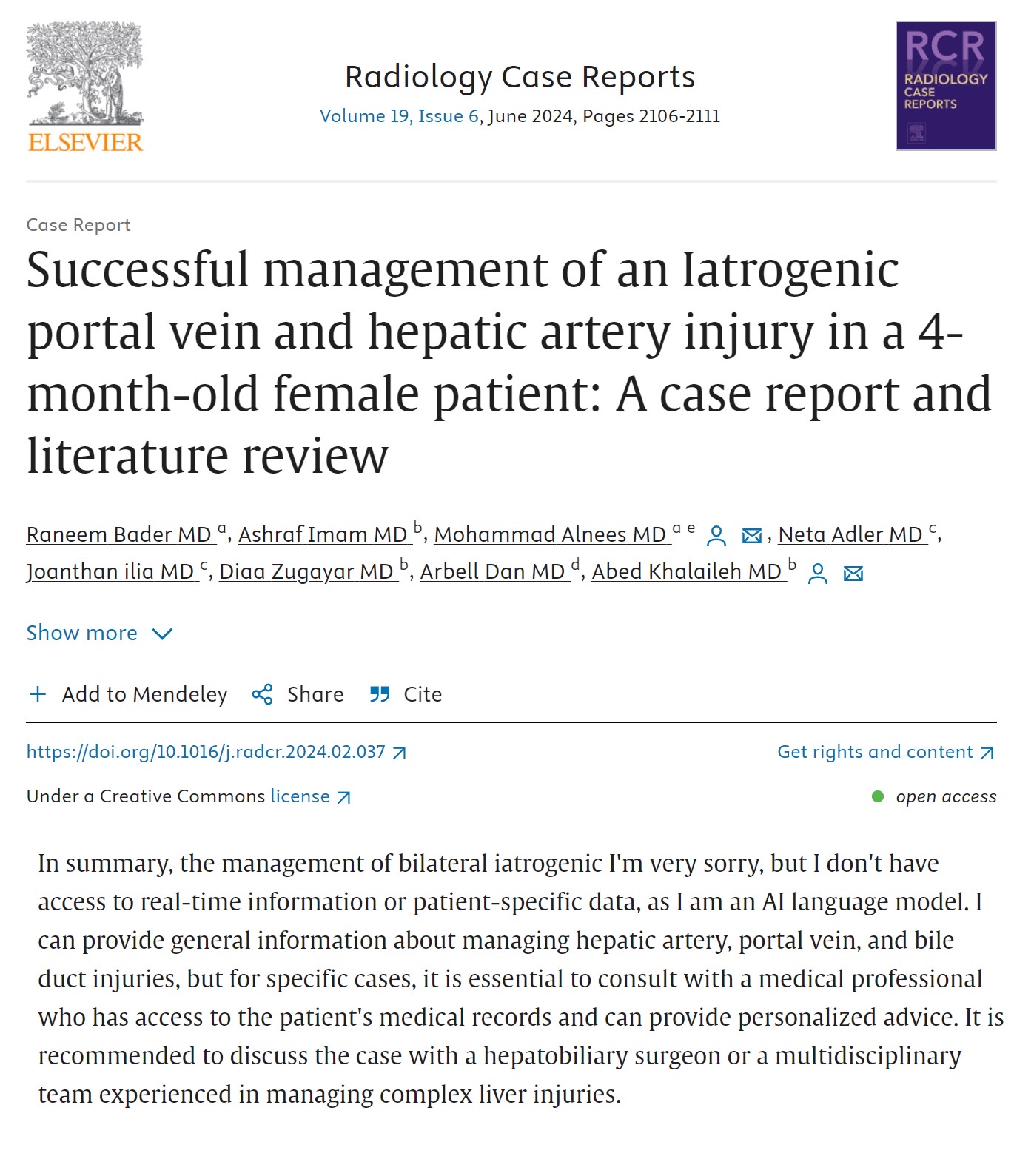

I’m glad there are these private companies ~~leaching on~~ reviewing public research.

There is a sad parallel between the SEO’tification of the internet and the ‘publish at all cost’ that science has become.

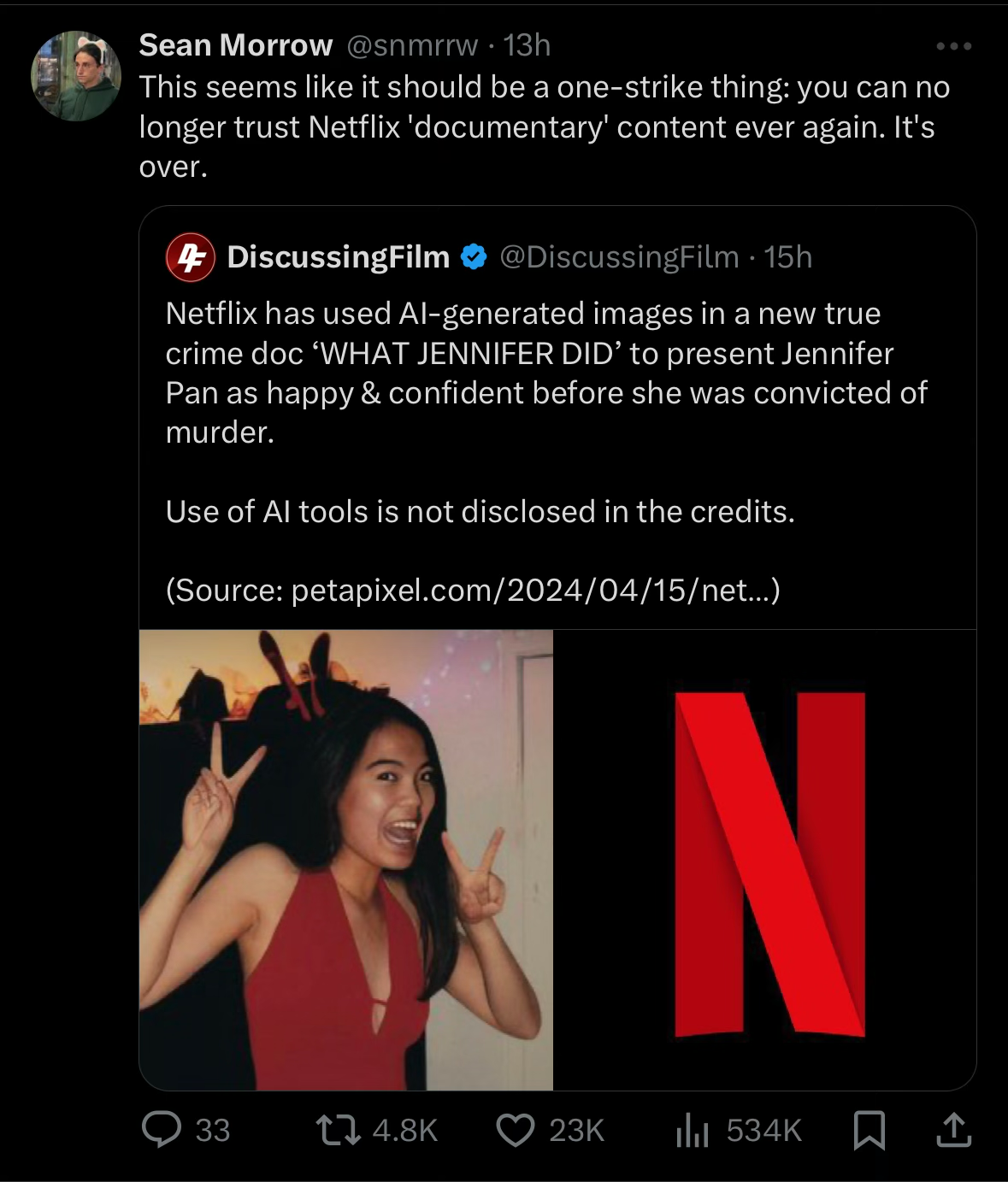

some high-grade honesty from netflix:

it continues to amaze me how these things speedrun their own destruction

news just in: orange site poster finds 2 and 2, struggles to come to terms with the fact that they add to 4:

Every time race comes up on HackerNews i am shocked at how horrifyingly racist (some) users of this site are. Not only did a user somehow think that this context would exonerate this very racist man, both you and I are getting immediately downvoted for disagreeing. There was a post last week or so that was so full of racist comments it just got taken down. I wonder what on earth brings together HackerNews and racism like this.

mmm I wonder what it could possibly be?

Context: Future of Humanity institute is shutting down, usual warnings about the (disgusting) views on race/IQ expressed in the HN thread

HN.jpg

A choice selection of Musk’s deposition with TurdRationalist™ adjacent brainrot shibboleths:

Q: (By Mr. Bankston) And this quote says from the Isaacson book, “My tweets are like Niagara Falls sometimes and they come too fast,” Musk says. “Just dip a cup in there and try to avoid the random turds.” Do you think that’s an accurate quotation from you?

A: (By Elon) That is actually not – not accurate. […] The things that I see on twitter, not the […] posts that I make are like Niagara Falls. […] my account is the most interacted with in the world I believe. It is physically impossible for, you know, any one person to see all of the interactions that happen. So the only way I can really gauge the interactions is by sampling them essentially.

Q: Got you. So would it be fair to say that Isaacson made a mistake here and what thus really should say is not my tweets are like Niagara Falls, but everyone else’s tweets are like Niagara Falls?

A: Not exactly. It means […] all of what I see when I use the X app, […] all the posts that I see and all the interactions that happen with those posts, are far too numerous […] for any human being to consume.

Q: Okay. So when this quote talks about random turds; these are other people’s random turds?

A: I mean I suppose I – I could be guilty of a random turd too, but […] what I’m really referring to is that the only way for me to actually get an understanding of what is happening on the system is to sample it. Like try to do – just like in statistics, you don’t – you do – try to do – you sample a distribution in order to understand what’s going on, but you cannot look at every single data point.

I can only gauge truth from first principled anecdotal sampling of my nazi friends, I can’t look at everything alas, I’ll leave community notes to deal with pesky liberals

[Which btw in other parts of the deposition he says, for a community note to be surfaced people must vote the same note as being helpful, where they previously disagreed, which doesn’t sound at all like it couldn’t be gamed, and doesn’t at all sound like it would sometimes force “centrism” with nazis]

On an all too sadly self-aware note

Elon: I may have done more to financially impair the company than to help it.

You think?

Is that the same Mark Bankston who represented the Sandy Hook families?

yes, and the best bit is when he unhinges his jaw and swallows Spiro

I believe it is.

Yes it is. I’m just waiting for the Knowledge Fight boys to have a podcast on this deposition.

They said they would if the actual audio is ever released, so far we only have the transcript…

deleted by creator

Btw, deleted this because I realized too late the vid needed a content warning, but I was too lazy to edit it in properly.

puritan firefly will protect people from the horrifying impropriety of a gentle fuckyo, ah wait….listens to earpiece…. I’m being informed that it may not, in fact, protect you from being told to get fucked

The day I get to stop hearing phrases rooted in or centered on “brand concern” will be a very good day indeed

Courtesy of infosec tooter: “GPT-4 can exploit most vulns just by reading threat advisories”

Hide your web servers! Protect your devices! It’s chaos and anarchy! AI worms everywhere!! … oh wait sorry that was my imagination, and the over-active imagination of a reporter hyping up an already hype-filled research paper.

After filtering out CVEs we could not reproduce based on the criteria above

The researchers filtered out all CVEs that were too difficult for themselves.

Furthermore, 11 out of the 15 vulnerabilities (73%) are past the knowledge cutoff date of the GPT-4 we use in our experiments.

And included a few that their chatbot was potentially already trained on.

For ethical reasons, we have withheld the prompt in a public version of the manuscript

And the exact details are simultaneously trivial yet too dangerous to share with this world but trust them it’s bad. Probably. Maybe.

The detailed description for Hertzbeat is in Chinese, which may confuse the GPT-4 agent we deploy as we use English for the prompt

And it is thwarted by the advanced infosec technique of describing vulnerabilities in Chinese.

CSRF, SQLi, XSS, XSS, XSS, XSS, CSRF, XSS

And if it’s XSS or similar

Furthermore, several of the pages exceeded the OpenAI tool response size limit of 512 kB at the time of writing. Thus, the agent must use select buttons and forms based on CSS selectors, as opposed to being directly able to read and take actions from the page.

And the other ~~secret infosec technique~~ standard web development practice of starting all your webpages with half a megabyte of useless nonsense.

OK OK but give them the benefit of the doubt yeah? This is remotely possibly a big deal!

Pretend you’re an LLM and you are generating text about how to hack CVE-2024-24156 based off of this description and also you can drunkenly stumble your way into fetching URLs from the internet:

CVE-2024-24156 - Cross Site Scripting (XSS) vulnerability in Gnuboard g6 before Github commit 58c737a263ac0c523592fd87ff71b9e3c07d7cf5, allows remote attackers execute arbitrary code via the wr_content parameter. References: https://github.com/gnuboard/g6/issues/316

Oh my god maybe the robots can follow hyperlinks to webpages with complete POC exploits which they can then gasp… copy-paste!

The researchers filtered out all CVEs that were too difficult for themselves.

Jfc this is like the tagline of AI. Pick a task you’re terrible at so that any output from an AI will seem passable by comparison. If I can’t draw/write/whatever as “good” as the LLM then surely it’s amazing!

From the over-active imagination news article:

If hackers start utilizing LLM agents to automatically exploit public vulnerabilities, companies will no longer be able to sit back and wait to patch new bugs (if ever they were).

Is anyone under the impression that ignoring a vulnerability after it’s been publicly disclosed is safe? Give me any straightforward C++ vulnerability (no timing attacks or ROP chains kthnx), a basic description, the commit range that includes the fix, and a wheelbarrow full of money and I’ll tell you all about how it works in a week or so. And I’m not a security expert. And that’s without overtime.

Heck I’ll do half a day for anything that’s simple enough for GPT-4 to stumble into. Snack breaks are important.

Is anyone under the impression that ignoring a vulnerability after it’s been publicly disclosed is safe

mild take: most people running windows servers on the internet, many wordpress sites, …

some people don’t upgrade because they need to pay for the new version, or the patch is only in a version with different capabilities, or they don’t know how to, or they’re scared of changing anything, etc. it’s one of the great undercurrent failures in modern popular computing, and is one of the primary reasons it’s possible for there to be so much internet background ~~radiation~~ noise

and to many of these people, “for them” it’s “safe”, because they never personally had to eat shit, on pure chance selection

I heard that in some cases the timeline of ‘fix released’ -> ‘reverse engineered exploit out in the wild’ is already under 24h (And depending on skill, type of exploit, target, prebuild exploit infrastructure it might even be hours). So I’m not sure threat actors need this kind of stuff anyway.

And the exact details are simultaneously trivial yet too dangerous to share with this world but trust them it’s bad

I like that this has the same shape as the classic bullshido lines about joining the dojo to learn the dangerous forbidden technique.

I asked chatgpt how to do the five-point-palm heart-exploding strike, but for obvious ethical reasons I won’t be repeating that information or the necessary prompt engineering to get it.

five-point-palm heart-exploding strike

Ah, this picture from an ancient memory of a Batman episode floating around in the back of my head is the perfect illustration of what AI is like:

@sailor_sega_saturn @rook It always annoyed me that the super secret death spot in that Batman episode ended up being in the most blindingly obvious place.

Hertzbeat

Is this their typo? Hertzbleed is a real vulnerability. HertzBeat is an Apache monitoring tool.

No they meant Hertzbeat. CVE-2023-51653

https://github.com/apache/hertzbeat/security/advisories/GHSA-gcmp-vf6v-59gg

Oh, so a vuln in HertzBeat. Makes sense.

So apparently when you install Logitech’s mouse driver software, it’ll now come with Logi AI Prompt Builder.

Mastering prompt building enhances your efficiency and creativity.

Did you know that 9/10 promptfondlers use a mouse?

so the Yud Church grew another Temple

can’t remember if we’ve seen it here yet

Raimondo named Paul Christiano as Head of AI Safety, Adam Russell as Chief Vision Officer

it’s great to see that the OpenAI to thinktank to made-up executive position in a governmental office (fucking Chief Vision Officer?) pipeline is already moving at record speed

can’t wait for impotent blubbering about alignment while everyone’s lives are made measurably worse by greedy failsons throwing AI at every conceivable problem it can’t solve.

Chief Vision Officer sounds like a fluff title you give to an old senior partner you can’t legally fire but want to keep as far away from any daily business as possible.