As in, are there some parts of physics that aren’t as clear-cut as they usually are? If so, what are they?

Yes, there are non-deterministic parts in physics. For example, radioactive decay. While we can measure and work with half-lives for large amounts of radioactive atoms, the decay of a single, individual atom is unpredictable. So in a way, you can get your desired dose of vagueness by controlling how many atoms you monitor. The fewer atoms, the more vagueness.
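To make the "fewer atoms, more vagueness" point concrete, here is a minimal Python sketch. It assumes the textbook model in which each atom's lifetime is drawn from an exponential distribution; the function name `surviving_fraction`, the seed, and the sample sizes are made up for illustration.

```python
import math
import random


def surviving_fraction(n_atoms, half_life, elapsed, rng):
    """Fraction of n_atoms still undecayed after `elapsed` time units.

    Assumption: each atom decays independently, with an exponentially
    distributed lifetime whose rate is ln(2) / half_life.
    """
    decay_rate = math.log(2) / half_life
    survivors = sum(
        1 for _ in range(n_atoms) if rng.expovariate(decay_rate) > elapsed
    )
    return survivors / n_atoms


rng = random.Random(42)  # fixed seed so the run is repeatable
for n_atoms in (1, 10, 1000, 100_000):
    fraction = surviving_fraction(n_atoms, half_life=1.0, elapsed=1.0, rng=rng)
    print(f"{n_atoms:>7} atoms: {fraction:.3f} survive one half-life")
```

With a single atom the answer can only be 0 or 1, while for large samples the fraction settles close to 0.5.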

Or another example from the same field: There are atoms for which we believe they are stable, although they theoretically could decay. But we never observed it. So maybe they are in fact stable, or maybe they decay just slower than we have time. Or only when we don’t look. Examples would be Helium-4 or Lead-208.

I also like the idea, inspired by Douglas Adams, that the universe itself could be a weird and random fluctuation, which just happens to behave as if it was a predictable, rationally conceivable thing. That actually, it’s all a random chain of junk events, and we’re fooled into spotting some patterns. This apparence could last forever or vanish the very next moment, who knows. Maybe it’s all just correlation and there is zero causation. As far as I know, we’ll never be able to tell. So fundamentally, all of it is a vague guess, supported by mountains of lucky evidence.

(Edit: Author name corrected)

There are atoms for which we believe they are stable, although they theoretically could decay. But we never observed it.

Bismuth-209 was for a long time considered to be the heaviest stable primordial isotope. It had been theorized for a while that it might technically decay, but no one proved that until 2003. It has a half-life of over a billion times the current age of the universe, and so for all practical purposes can be treated as if it is stable.

I’m no physicist, so I may very well be way out of my element, but I would personally not be the least bit surprised if it turned out every atom was technically unstable, but since the decay is so incredibly slow we may never be able to accurately detect it. Using the lead-208 example you gave, if it ever is proven to be unstable, the half-life should be at least 10^25 (10,000,000,000,000,000,000,000,000, or ten septillion) times longer than the age of the universe. Smarter people than myself probably have some ideas, but I couldn’t imagine how you could possibly attempt to measure something like that.
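To get a feel for why measuring this would be so hard, here is a rough Python sketch of how many decays you would expect to see. It takes the 10^25-times-the-age-of-the-universe figure from the comment above at face value (it is not independently checked here); the function name and the sample size of one mole are assumptions for illustration.

```python
import math

AGE_OF_UNIVERSE_YEARS = 1.38e10  # roughly 13.8 billion years
AVOGADRO = 6.022e23              # atoms in one mole


def expected_decays(n_atoms, half_life_years, observation_years):
    """Expected number of decays among n_atoms during the observation time.

    Uses N * (1 - 2^(-t/T)), written with expm1 so that it stays
    accurate when t/T is extremely small.
    """
    exponent = -math.log(2) * observation_years / half_life_years
    return n_atoms * -math.expm1(exponent)


# Hypothetical half-life taken from the comment: 10^25 ages of the universe.
half_life = 1e25 * AGE_OF_UNIVERSE_YEARS
one_mole_one_year = expected_decays(AVOGADRO, half_life, 1.0)
print(f"Expected decays in one mole over one year: {one_mole_one_year:.2e}")
```

Under these assumptions you would expect on the order of 3e-12 decays per year in a mole of atoms, so a detector would have to watch an enormous sample for a very long time to see even one event.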

Oh wow, thanks for the details! 10^25 years … no, times … yeah, crazy. I mean, that’s beyond homeopathic. Since I learned about this topic as an interested layman, I somehow assumed everything can decay, and we simply call the things “stable” which do so very slowly. Which can mean as many atoms decay over the course of a billion years as there are medically effective molecules in homeopathic “medicine”; none.

Good answer! Thanks for that. Also, good use of ‘apparence’ - not a word i see often.

Apparently Bismuth-209 has what is considered an “alpha decay” with a half life longer than the lifetime of the universe - whatever that means. So yeah, entered into some fuzzy physics there.

Examples would be Helium-4

The standard model predicts that hydrogen-1 is the only stable nuclide because electroweak instantons allow three baryons (such as nucleons: protons and neutrons) to decay into three antileptons (antineutrinos, positrons, antimuons, and antitauons), which implies the instability of any nuclide with a mass number of at least three; or for two baryons to decay into an antibaryon and three antileptons, which would imply that deuterium could decay into an antiproton and 3 antileptons.

This is very rarely discussed because the nuclides that can only decay through baryon anomalies would be predicted by the standard model to have ludicrously long half lives (to my memory, something roughly around 10^150 years, but I might be wrong).

Hydrogen-1 is stable in the standard model, as it lacks a mechanism for (single) proton decay.

Disclaimer: I’m not a physicist, but I am a scientist. Science as a whole is usually taught in school as though we already know everything there is to know. That’s not really accurate.

Science is really sort of a black box system. We know that if you do this particular thing at this particular time, then we get this particular response. Why does that response happen? Nobody really knows. There’s a lot of “vague” or unknown things in all of science, physics included. And to be clear, that’s not invalidating science. Most of the time, just knowing that we’ll get a consistent response is enough for us to build cool technologies.

One of the strangest things I’ve heard about in physics is the quantum eraser experiment, and as far as I’m aware, to this day nobody really knows why it happens. PBS Spacetime did a cool video on it: https://youtu.be/8ORLN_KwAgs?si=XqjFEjDfmnZX31Mn

What do you mean exactly? This question is vague… :D

Nothing is really definite. The right word to use would be consistent. We don’t know the larger or the smaller picture, just that the small pocket of all physics we know is related in a certain way in a comprehensible manner.

I’ve read that all math breaks down as you approach the big bang. I’m not educated enough in math to understand how, or why, but apparently they cannot mathematically understand the origin of the universe.

It’s probably this:

Another problem lies within the mathematical framework of the Standard Model itself—the Standard Model is inconsistent with that of general relativity, to the point that one or both theories break down under certain conditions (for example within known spacetime singularities like the Big Bang and the centres of black holes beyond the event horizon).[4]

My ELI5: Both theories work great, supported by vast amounts of evidence and excellent theoretical models. It seems they are two tools with distinct purposes. One for big and heavy stuff, the other for small and energetic stuff. The problem arises when big and heavy stuff is compressed into tiny spaces. This case is relevant for both theories, but here they don’t match, and we don’t know which to apply. It’s a strong hint we lack understanding, one of the biggest unsolved problems in physics.

So math itself is probably fine, we’re just at a loss how to use it in these extreme cases.

The universe is infinite, as far as we know.

But if you condense it all into something infinitely dense, then is it suddenly finite in size? Does it still have infinite size and simultaneously infinite density? Why didn’t the immense density cause it to form a black hole?

I don’t think current understanding of things is that the universe is infinite. We can estimate the size of the universe we know, because we know how fast it is spreading and for how long. Wiki says: "Some disputed estimates for the total size of the universe, if finite, reach as high as 10^(10^(10^122)) megaparsecs." We don’t know whether that’s all there is though. We don’t even know whether the universe has the same properties everywhere, which complicates things.

My understanding is that it has a 14 billion light-year radius from any given point. We can only see 14 billion light years away, since the universe is only 14 billion years old (actually 13.8). Light can only travel at a given speed, so we can’t see beyond the distance light has traveled during the existence of the universe. But since the universe expanded in all directions, from everywhere all at once, it’s truly infinite. If you were to teleport 14 billion light-years in any direction, you would still see 14 billion years away, since the universe expanded from that point too during the big bang. It’s mindfuck level stuff.

That understanding is intuitive but very wrong. We can see parts of the universe that are up to 46 billion light years away because of the expansion of space. The actual physical universe extends beyond that, further than we can observe.

How can we see 46 billion years away? I’ve never heard that before.

The expansion of the universe is measured at 70km per second per megaparsec (~3 million light years).

So if you take 2 things that started say ~3 billion light years apart (which would be ~1000x a megaparsec), that means every single second the universe has existed those 2 points have gotten 70,000km further apart. And now that they’re further apart, they separate even faster the next second.

For reference:

- 31.5 million seconds in a year. ( 3.15 x 10^7 )

- universe is 13.8 billion years old ( 1.38 x 10^10 )

So we’re talking about this 70,000km getting added between the 2 points ~4 x 10^17 times.

Then you gotta bring calculus into it to factor in the changing distance over time.

It … adds up. Which is why you’ll see the estimates for the observable universe’s radius being ~46.5 billion light years (93 billion light year diameter), even though the universe had only existed for ~14 billion years.
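For anyone curious what "bringing calculus into it" looks like, here is a rough Python sketch that integrates the expansion history numerically. It assumes a roughly flat universe with matter, radiation and dark energy; the density parameters (0.3, 0.7, 8.5e-5) and the function name are illustrative assumptions, not values from this thread, and only the 70 km/s/Mpc comes from the comment above.

```python
import math

C_KM_PER_S = 299_792.458   # speed of light
MPC_IN_GLY = 3.2616e-3     # one megaparsec in billions of light years


def particle_horizon_gly(h0=70.0, omega_m=0.3, omega_lambda=0.7,
                         omega_r=8.5e-5, steps=100_000):
    """Present-day distance to the edge of the observable universe, in Gly.

    Evaluates D = (c / H0) * integral_0^1 da / sqrt(Or + Om*a + OL*a^4),
    where a is the scale factor. The substitution a = u^2 keeps the
    integrand smooth near a = 0; the midpoint rule does the rest.
    """
    du = 1.0 / steps
    total = 0.0
    for i in range(steps):
        u = (i + 0.5) * du
        total += 2.0 * u / math.sqrt(
            omega_r + omega_m * u**2 + omega_lambda * u**8
        )
    hubble_distance_gly = (C_KM_PER_S / h0) * MPC_IN_GLY
    return hubble_distance_gly * total * du


print(f"Radius of observable universe: ~{particle_horizon_gly():.0f} Gly")
```

With these assumed parameters the result lands in the mid-40s of billions of light years, in the same ballpark as the commonly quoted ~46.5.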

And now that they’re further apart, they separate even faster the next second.

That’s a common misconception! Barring effects of matter and dark energy, the two points do NOT separate faster as they get farther apart, the speed stays the same! The Hubble constant H0 is defined for the present. If you are talking about one second in the future, you have to use the Hubble parameter H, which is the Hubble constant scaled with time. So instead of 70 km/s/Mpc, in your one-second-in-the-future example the Hubble parameter will be

70 * age of the universe / (age of the universe + 1 second) = 69.999...9

and your two test particles will still be moving apart at 70000km/s exactly.

The inclusion of dark energy does mean that the Hubble “constant” itself is increasing with time, so the recession velocity of distant galaxies does increase with time, but that’s not what you meant. Moreover, the Hubble constant hasn’t always been increasing! It has actually been decreasing for most of the age of the universe! The trend only reversed 5 billion years ago when the effects of matter became less dominant than effects of dark energy. This is why cosmologists were worried about the idea of a Big Crunch for a while - if there had been a bit more matter, the expansion could have slowed down to zero and reversed entirely!
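The one-second-in-the-future argument can be checked with a few lines of Python. This is only a sketch of the simplified case described above (no matter, no dark energy), where the Hubble parameter scales as 1/time and distances scale linearly with time; the function name and the starting separation of 1000 Mpc are assumptions for illustration.

```python
AGE_SECONDS = 13.8e9 * 3.15e7   # age of the universe in seconds
H0 = 70.0                       # Hubble constant today, km/s/Mpc
D0_MPC = 1000.0                 # separation today, in megaparsecs


def recession_after(dt_seconds):
    """Hubble parameter, separation and recession speed dt seconds from now.

    Assumption: a coasting universe, so H is proportional to 1/t and the
    separation grows in proportion to t.
    """
    hubble = H0 * AGE_SECONDS / (AGE_SECONDS + dt_seconds)
    distance = D0_MPC * (AGE_SECONDS + dt_seconds) / AGE_SECONDS
    return hubble, distance, hubble * distance


for dt in (0.0, 1.0, 1e17):
    hubble, distance, speed = recession_after(dt)
    print(f"dt={dt:.0e} s  H={hubble:.6f}  d={distance:.3f} Mpc  v={speed:.3f} km/s")
```

The separation grows and the Hubble parameter shrinks, but their product, the recession speed, stays at 70000 km/s.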

Thanks! That kind of math is definitely above my education.

The light didn’t travel 46 billion lightyears, but the objects whose light we are seeing are 46 billion lightyears away by the time we collect that light due to expansion. So the agreed on “radius of the observable universe” is 46.something GLY

How do they calculate that? Distance from object times known expansion rate, or something?

NASA says the universe is flat.

It’s impossible to measure precisely enough to know for sure that it is completely flat, or even saddle shaped (both being infinite in size). The generally accepted understanding by cosmologists is that it is infinite. But just due to the nature of measurement and tools we can’t completely rule out a finite universe. However we do know based on the measurements that it is really really… really really really big if it’s not infinite.

One of the first things you learn in college-level science is accuracy and precision. A measurement can have a degree of correctness and a degree of exactness about the value. For example a sensor may get the wrong reading 3% of the time. When you have a big pile of readings, you don’t always have the time to validate them all. So, there is some uncertainty that you accept. The same sensor may only be able to give you an accurate reading down to a specific decimal point, which is expressed as precision. Anything less is given as a range in which error exists. These ideas are important, because when you do calculations based on those readings, you have to take the error with you. There may be a point where the value you reach is overshadowed by the magnitude of the error.
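As a small illustration of "taking the error with you", here is a Python sketch of the standard rule for subtracting two independent measurements: the uncertainties add in quadrature. The readings and the function name are made up for the example.

```python
import math


def subtract_with_error(a, sigma_a, b, sigma_b):
    """Return (a - b) and its uncertainty.

    Assumption: the two readings are independent, so their
    uncertainties combine as sqrt(sigma_a^2 + sigma_b^2).
    """
    return a - b, math.hypot(sigma_a, sigma_b)


# Two hypothetical sensor readings, each good to +/- 0.3 units.
difference, uncertainty = subtract_with_error(10.2, 0.3, 10.0, 0.3)
print(f"difference = {difference:.2f} +/- {uncertainty:.2f}")
```

Here the computed difference (about 0.2) is smaller than its own uncertainty (about 0.42), which is exactly the situation where the value is overshadowed by the error.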

No, physics is never vague. Some problems are currently computationally intractable beyond a specific level of accuracy (like predicting the weather more than 2 weeks out), and some questions we do not know answers yet but expect to find answers to in the future (like why did the big bang happen). But there is never an element of the mysterious or spiritual or “man can never know”.

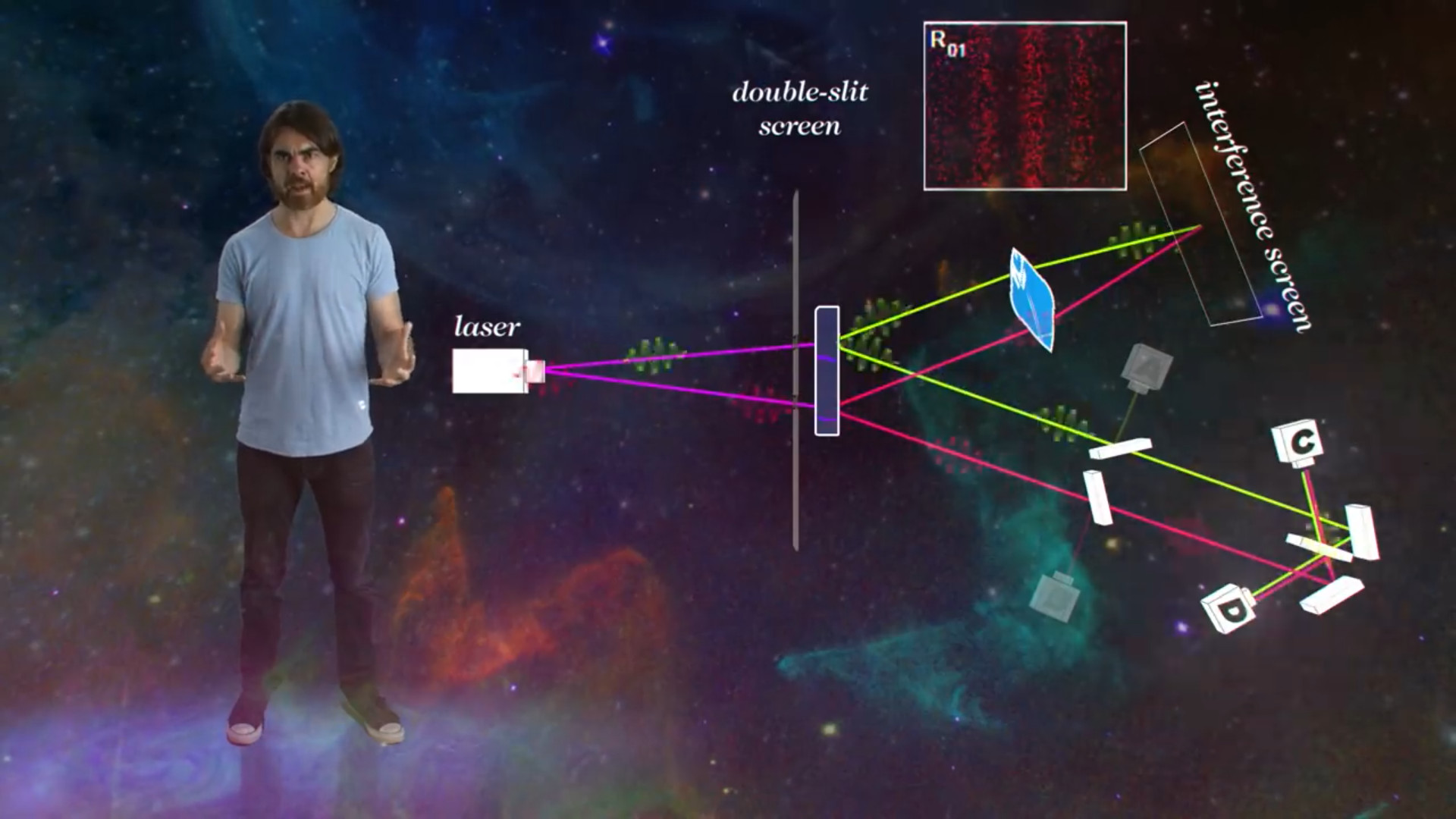

Popular science physics often gets mysterious, but that is a failure of popularization. Like the observer effect in quantum physics, which is often depicted as a literal eyeball watching the photons go through a double slit (?!). This may cause a naive viewer to mistakenly start thinking there is something special or unique about the human eyeball and the human consciousness that physics cannot explain. It gets even worse - one of the most popular double slit videos on youtube for example is “Dr. Quantum explains the double slit experiment” (not even gonna link it) which is not only laughably misleading, but upon closer examination was produced by a literal UFO cult, and they surreptitiously used the video to funnel more members.

Or the “delayed choice quantum eraser experiment” which confounded me for years (“What’s that? I personally can make a choice now that retroactively changes events that have already happened in the past? I have magical time powers?”), until I grew tired of being bamboozled by all its popular science depictions and dug up the actual original research paper on which it is based. Surprise! Having read the paper I have now understood exactly how the experiment works and why the result is sensible and not mysterious at all and that I don’t have magical powers. Sabine Hossenfelder’s video on YouTube debunking the delayed-choice quantum eraser was the first and so far one of only two videos I have seen in the years since that have also used the actual paper. This has immediately made me respect her, regardless of all the controversy she has accumulated before or since.

I am generally somewhat skeptical about your comment. Sure, I hadn’t heard about Sabine’s video about the quantum eraser, but I don’t necessarily think that it disproves the idea that physics is never vague or unknown.

Perhaps it is different in physics than my own field, but if you read enough primary papers, enough lit reviews, at least in my field, you’ll see some common themes come up. Things such as “further research is required to determine this mechanism,” “the factors that are involved are unknown,” “it is unclear why this occurs.” Actually, your suggestion that nothing is vague is entirely counter to my entire field of science. When we introduce ourselves in our field, we start off with a sentence about what we do not know. And perhaps it is my bias, having worked in my field, but I cannot see how any scientist could possibly say that nothing is vague.

To me, my interpretation is that “science is not vague” is itself a symptom of popular science. “Science is mystical” is simply a symptom of a slightly different disease - the disease of poor popular science communication. But I think that’s distinctly different from the question, which is asking if anything was vague. I’d love to hear your thoughts on the matter.

Oh yeah for sure, I don’t mean at all to say that all questions have been answered, and even the answers that we do have get more and more vague as you move down the science purity ladder. If all questions were solved, we would be out of a job for one! But I choose to interpret OP’s question as “is there anything unknowable?”. That’s the question relevant to our world right now, and I often disagree with the world view implied by popular science - that the world is full of wonder but also mystery. The mystery is not fundamental, but rather an expression of our individual and collective ignorance. There are even plenty of questions, like the delayed-choice quantum eraser, that have already been solved, and yet they keep popping up as examples of the unknowable, one even sniped me in this very thread (hi there!). Then people say “you do not even know whether eating eggs is good for you” and therefore by implication we shouldn’t listen to scientists at all. In that sense, the proliferation of the idea of mystery has become dangerous. The answer to unanswered questions is not gnosticism, it is as you said “further research” 😄!

Thank you for the thoughtful response. I see that we interpreted the question differently, based on what we thought was the issue of science communication. Which I think is really interesting!

I see what you mean - the people who impose their fantasies onto the science, who seemingly think there is some sort of “science god” who determines what fact is true on which days. Certainly, they are a problem. My experiences with non-scientific folk have actually usually been something of the opposite. They think that science is overly rigid and unchanging. They believe that science is merely a collection of facts to be memorized and models to be applied. Perhaps this is just the flip side of the same problem (maybe these people interpret changes in our knowledge to be evidence that there is no such thing as true facts?)

The difference in interpretation might stem from a difference in our fields. I assume you study physics. And I must assume that scientific rigor in physics depends on being certain about your discoveries. In my field (disease and pathogenesis), the biggest challenge is, surprisingly enough, convincing people that diseases are important things that need to be studied. Or perhaps that’s not a big surprise, given the public’s response to COVID-19. Even grant readers have to be convinced that there is merit in studying your disease of interest.

When I speak of my research to non-scientific people, a lot of the times the response is simply, “why not just use antibiotics? Why do we care about how diseases happen when we can just treat it?” A lot of my field, even in undergraduate programs, is dedicated to breaking down the notion that “we know enough, so don’t bother looking deeper.” I think there’s a very strong mental undercurrent in my field that we know next to nothing, and that we need to very quickly expand our knowledge before conventional medical science, especially our overreliance on antibiotics, gives out and fails. For instance, did you know that one of the most fundamental infection-detecting systems in our bodies (pattern recognition receptors) was discovered in mammals just over 20 years ago? The idea of pattern recognition receptors is literally only college-age.

So I actually find it interesting that you interpret the question so differently. It’s a testament to how anti-science rhetoric manifests in different ways to different fields

Thank you for your perspective! I found it really informative!

There are even plenty of questions, like the delayed-choice quantum eraser, that have already been solved

No, it has not been solved. At least not solved to the satisfaction of many physicists.

In one respect, there is nothing to solve. Everyone agrees on what you would observe in this experiment. The observations agree with what quantum equations predict. So you could stop there, and there would be no problem.

The problem arises when physicists want to assign meaning to quantum equations, to develop a human intuition. But so far every attempt to do so is flawed.

For example, the quantum eraser experiment produces results that are counterintuitive to one interpretation of quantum mechanics. Sabine’s “solution” is to use a different interpretation instead. But her interpretation introduces so many counterintuitive results for other experiments that most physicists still prefer the interpretation that can’t explain the quantum eraser. Which is why they still think about it.

In the end, choosing a particular interpretation amounts to choosing not if, but how QM will violate ordinary intuition. Sabine doesn’t actually solve this fundamental problem in her video. And since QM predictions are the same regardless of the interpretation, there is no correct choice.

Have we watched the same Sabine video? Delayed choice quantum eraser has nothing to do with interpretations of quantum mechanics, at least in so far as every interpretation (Copenhagen, de Broglie-Bohm, Many-Worlds) predicts the same outcome, which is also the one observed. The “solution” to DCQEE is a matter of simple accounting. And every single popular science DCQEE video GETS IT WRONG. The omission is so reckless it borders on malicious IMO.

For example, in that PBS video linked in this very thread, what does the host say at 7:07?

If we only look at photons whose twins end up at detectors C or D, we do see an interference pattern. It looks like the simple act of scrambling the which-way information retroactively [makes the interference pattern appear].

This is NOT WHAT THE PAPER SAYS OR SHOWS! On page 4 it is clear that figure R01 is the joint detection rate between screen and detector C-only! (Screen = D0, C = D1, D = D2, A = D3, B omitted). If you look at photons whose twins end up at detectors C inclusive-OR D, you DO NOT SEE AN INTERFERENCE PATTERN. (The paper doesn’t show that figure, you have to add figures R01 and R02 together yourself, and the peaks of one fill the troughs of the other because they are offset by phase π.) You get only 2 big peaks in total, just like in the standard which-way double slit experiment. The 2 peaks do NOT change retroactively no matter what choices you make! You NEED the information of whether detector C or D got activated to account which group (R01 or R02) to assign your detection event to! Only after you do the accounting can you see the two overlapping interference patterns within the data you already have and which itself does not change. If you consumed your twin photon at detector A or B to record which-way information, you cannot do the accounting! You only get one peak or the other (figure R03).

It’s a very tiny difference between lexical “OR” and inclusive “OR”, but in this case it makes ALL the difference. For years I was mystified by the DCQEE and how it exposes the ability of retrocausality, and turns out every single video simply lied to me.
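The accounting argument can be sketched in a few lines of Python. This is a toy model, not the data from the paper: the Gaussian envelope, the fringe spacing and the function names are all assumptions, chosen only to show how two fringe patterns offset by phase π add up to a pattern with no fringes.

```python
import math

FRINGE_FREQUENCY = 6.0  # arbitrary fringe spacing for the toy model


def envelope(x):
    """All screen hits lumped together (toy Gaussian shape)."""
    return math.exp(-x * x / 2.0)


def r01(x):
    """Screen hits whose twin photon fired detector C (toy fringes)."""
    return envelope(x) * (1.0 + math.cos(FRINGE_FREQUENCY * x)) / 2.0


def r02(x):
    """Screen hits whose twin fired detector D: same fringes shifted by pi."""
    return envelope(x) * (1.0 - math.cos(FRINGE_FREQUENCY * x)) / 2.0


positions = [i / 10.0 for i in range(-30, 31)]
for x in positions[::10]:
    print(f"x={x:+.1f}  C only={r01(x):.3f}  D only={r02(x):.3f}  "
          f"C or D={r01(x) + r02(x):.3f}")
```

Sorted by which detector fired, each subset shows fringes; added together, the peaks of one fill the troughs of the other and only the smooth envelope remains.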

Right, but in order to get the observed effect at D1 or D2 there must be interaction/interference between a wave from mirror A and a wave from mirror B (because otherwise why would D1 and D2 behave differently from D3 and D4?).

And that’s a problem for some interpretations of QM. Because when one of the entangled photons strikes the screen, its waveform is considered to have “collapsed”. Which means the waveform of the other entangled photon, still in flight, must also instantly “collapse”. Which means the photon still in flight can be reflected from mirror A or mirror B, but not both. Which means no interaction is possible at D1 or D2.

It’s not a problem for Copenhagen if that’s the interpretation you are referring to. Yes, the first photon “collapses” when it strikes the screen, but it still went through both slits. Even in Copenhagen both slit paths are taken at once, the photon doesn’t collapse when it goes through the slit, it collapses later. When the first photon hits the screen and collapses, that doesn’t mean its twin photon collapses too. Where would it even collapse to, one path or the other? Why? The first photon didn’t take only one path! The twin photon is still in flight and still in superposition, taking both paths, and reflecting off both mirrors.

When the first photon hits the screen and collapses, that doesn’t mean its twin photon collapses too.

Yes, it does. By definition, entangled particles are described by a single wave function. If the wave function collapses, it has to collapse for both of them.

So for example, an entangled pair of electrons can have a superposition of up and down spin before either one is measured. But if you detect the spin of one electron as up, then you immediately know that the spin of the second electron must be down. And if the second electron must be down then it is no longer in superposition, i.e. its wave function has also collapsed.

I believe Heisenberg says there’s vagueness in the amount of things we can know at once. But I agree there’s nothing we shouldn’t be able to know, only things we know that we can’t know simultaneously, which imo is “vagueness”. However the uncertainty principle is something I hope falls some day with better measurement devices than we had a hundred years ago.

Also everyone should listen to Sabine, she’s among the least biased science educators imo. People need to be really careful what they learn from YouTube creators, in fact that was a subject of a recent Sabine video!

I wouldn’t say that Sabine is among the “least biased”. She strongly advocates for superdeterminism, and her videos on the subject presume it is true even though it is still unproven and currently accepted only by a minority of physicists.

On the subject of Heisenberg Uncertainty - even there I blame popular science for having misled me! “You can’t know precise position and momentum at once” - sounds great! So mysterious! If you dig a little deeper, you might even get an explanation like that to measure the position of something you have to bombard it with particles (photons, electrons), and when it’s hit its velocity will change in a way you do not know. The smaller that something is, and the more you bombard it to get more precise position, the more uncertainty you will get.

All misleading! It was not until having taken an actual physics class where I learned how to calculate HU that I realized that not only is HU the result of simple mathematics, but that it also incidentally solves the thousands-years-old Zeno Paradox almost as a side lemma - a really cool fact that I was taught nowhere before!

Basically the wavefunction is the only thing that exists. The function for a single particle is a value assigned to every point in space, the values can be complex numbers, and the Schroedinger equation defines how the values change over time, depending on their nearby values in the now. That function is the particle’s position (or rather its square absolute magnitude) - if it is non-zero at more than one point we say that the particle is present in two places at once. What is the particle’s velocity? In computer games, each object has a value for a position and a value for a velocity. In quantum mechanics, there is no second value for velocity. The wavefunction is all that exists. To get a number that you can interpret as the velocity, you need to take the Fourier transform of the position function. And you don’t get one number out, you get a spectrum.

In one dimension, what is the Fourier transform of the delta function (a particle with exactly one position)? It is a constant function that is non-zero everywhere! (More precisely it is a corkscrew in the complex values, where the angle rotates around but magnitude remains the same). A particle with one position has every possible momentum at once! What is the Fourier transform of a complex-valued corkscrew? A delta function! Only a particle that is present everywhere at once can be said to have a precise momentum! The chirality of the particle’s corkscrew position function determines whether it is moving to the left or to the right. Zeno could not have known! Even if you look at an infinitesimal instant of time, the arrow’s speed and direction is already well-defined, encoded in that arrow’s instantaneous position function!
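The delta-function and corkscrew claims can be checked on a small grid with a discrete Fourier transform. This is a minimal Python sketch using a hand-written DFT; the grid size of 16 and the chosen positions are arbitrary assumptions, and a discrete grid is only a stand-in for the continuous case.

```python
import cmath


def dft(values):
    """Plain discrete Fourier transform of a list of complex numbers."""
    n = len(values)
    return [
        sum(v * cmath.exp(-2j * cmath.pi * k * m / n)
            for m, v in enumerate(values))
        for k in range(n)
    ]


N = 16

# A "particle at exactly one position": all weight on grid point 3.
delta = [0.0] * N
delta[3] = 1.0
delta_spectrum = dft(delta)
print([round(abs(c), 6) for c in delta_spectrum])  # same magnitude everywhere

# A "corkscrew": constant magnitude, phase rotating 5 turns across the grid.
corkscrew = [cmath.exp(2j * cmath.pi * 5 * m / N) for m in range(N)]
corkscrew_spectrum = dft(corkscrew)
print([round(abs(c), 6) for c in corkscrew_spectrum])  # single spike at index 5
```

Reversing the sense of rotation of the corkscrew moves the spike to the mirrored index, which is the grid version of "moving left versus moving right".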

If you try to imagine a function that minimizes uncertainty in both position and momentum at once, you end up with a wavepacket - a normal(?)-distribution-shaped curve peak that is equally minimally wide in both position and momentum space. If it were any narrower in one, it would be way wider in the other. That width squared is precisely the minimum possible value of Heisenberg Uncertainty in that famous

Δx*Δp >= ħ/2

equation. It wasn’t ever about bombardment at all! It was just a mathematical consequence of using Fourier transforms.

Even once you understand that the uncertainty principle is not the same as the observer effect, I think it’s still mysterious for the same reason that “the wavefunction is the only thing that exists” is mysterious.

If anything, it’s more mysterious once you understand the difference. People are more willing to accept “Your height cannot be measured with infinite precision” than “Your height fundamentally has no definite value”, but the latter is closer to the truth than the former.

The uncertainty principle fundamentally can’t fall. It’s not a limitation of our measurement devices, it’s a fundamental limitation of physics that, as far as we know, can’t be broken.

Also, Sabine Hossenfelder has horrible takes regarding trans people, so I’d take anything from her beyond her immediate field with a giant grain of salt.

Isn’t the idea that the world is never vague, kind of an assumption? What if some are, and some aren’t?
